Mercifully, we are now at the point in time where the wave of immense fantasy over ChatGPT being a nascent, omniscient entity has started to crash onto the impenetrable rocks of reality.

People have slowly begun moving on from the existential “shock and awe” into the territory of more mundane considerations, such as “can I use it to make my life easier?”

The answer heavily depends on three factors.

The domain of the problem being solved.

Do you need help starting off a presentation on something you are not an expert in (say, Tensor Calculus)? No problem, ChatGPT will beautifully point you in the right direction.

The quality demands of the deliverable.

The vast majority of people are notoriously bad at creating presentations, therefore the expected performance bar you need to pass is low. ChatGPT can act like a little stool here for you to stand upon, no problem.

The expertise (or lack thereof) of the evaluators.

If you are giving a talk about Tensor Calculus to people who know nothing about it, does it really matter how robust your claims are? On the flip side, are you comfortable talking semi-nonsense when experts sit comfortably among the crowd?

In other words, if you are building a plane you are expected to be an expert at whatever it is you do and we definitely have hard expectations that the plane won’t crash for no good reason.

But what if you are a beginner in your journey for excellence, heroically making coffee for others at the office? Well, Large Language Models like GPT can definitely be helpful. And their so-called “instruct” versions make the interaction with them comfortable. So, it’s a great time to be alive.

What about using LLMs when developing software?

Is there a real benefit there?

Before we answer that, we must avoid the Wittgenstein trap of varying meanings of key concepts. Let us boldly make some steep assertions to clear up any lingering confusion.

A) LLMs do not reason.

Like, never. It certainly appears to a human that they do but they really don’t. How do we know? That’s because the recipe for making an LLM is no secret. Like the witches of old, we know exactly what is thrown into the cauldron to get the magic potion.

As a very high-level approximation, LLMs acquire their knowledge by learning a “distance” between words. Then, given some input, they guess what should come next.

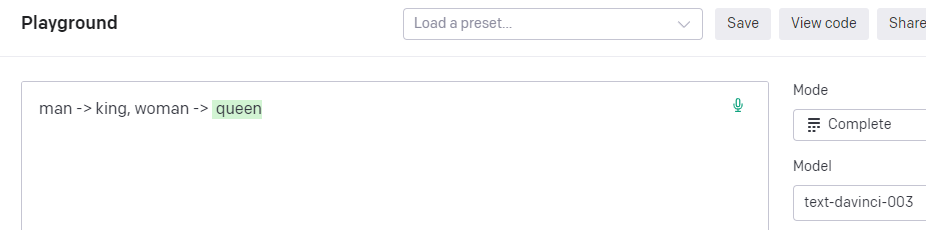

Here we asked GPT-3: if “man” relates to “king”, what does “woman” relate to? And it gave the correct answer.

But how did it know? Put on your sci-fi glasses and imagine a space with an untold number of dimensions. And within that humongous space, there are some tiny little stars, in galaxies far far away. You have been tasked by the Emperor to map the whole universe and root out the Resistance … but I digress.

It could guess “queen” because there is a directional path between “man” and “king”. If you walk the exact same steps of that path but begin from the word “woman”, you will eventually arrive somewhere. The nearest word around that place is the word “queen”.
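The walk described above can be sketched with a few hand-made vectors. This is only a toy under loud assumptions: the two axes ("royalty" and "gender"), the coordinates, and the `analogy` helper are all invented for illustration, whereas real embeddings are learned from text and have hundreds or thousands of dimensions.

```python
import math

# Hand-made 2-D "embeddings": axis 0 = royalty, axis 1 = gender.
# These numbers are invented for illustration, not learned from text.
VECTORS = {
    "man": (0.0, 1.0),
    "woman": (0.0, -1.0),
    "king": (1.0, 1.0),
    "queen": (1.0, -1.0),
    "prince": (0.8, 0.9),
    "apple": (-1.0, 0.0),
}


def analogy(a, b, c):
    """Walk the a -> b path starting from c and return the nearest other word."""
    # The "steps" of the path from a to b.
    step = [vb - va for va, vb in zip(VECTORS[a], VECTORS[b])]
    # Take the same steps, but starting from c.
    target = [vc + s for vc, s in zip(VECTORS[c], step)]
    # Look for the closest word, ignoring the three words we started with.
    candidates = (w for w in VECTORS if w not in (a, b, c))
    return min(candidates, key=lambda w: math.dist(VECTORS[w], target))


print(analogy("man", "king", "woman"))  # queen
```

Nothing in this sketch knows what a king or a queen is. It only measures which point lies closest to where the walk ends.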

That’s how AI “understands” words. LLMs take this concept one step further:

- Download all the text on the Internet and break it down into little “word fragments”.

- Spend a vast amount of money to create a Universe of stars and create a map of all the highways, roads and mountain paths between them.

- Write lyrics about the weather in the style of Snoop Dogg.

How does it write those lyrics? Your prompt points it to some position in that multi-dimensional universe and then it tries to “statistically infer” (fancy speak for “guess”) what the next word to extend your input should be.
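To make the "guess the next word" idea concrete, here is a deliberately crude stand-in: a counter of which word follows which in a tiny made-up corpus. A real LLM uses a neural network over word fragments, not a lookup table, so treat `CORPUS`, `guess_next`, and `extend` as hypothetical names in a sketch of the principle only.

```python
from collections import Counter, defaultdict

# A tiny invented corpus standing in for "all the text on the Internet".
CORPUS = "the sun is hot the rain is cold the sun is bright the sun is hot"

# For every word, count which words were seen right after it.
follows = defaultdict(Counter)
words = CORPUS.split()
for current, nxt in zip(words, words[1:]):
    follows[current][nxt] += 1


def guess_next(word):
    """Return the most frequent follower of `word`, or None if never seen."""
    counts = follows.get(word)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def extend(prompt, n_words):
    """Extend the prompt by repeatedly guessing the next word."""
    out = prompt.split()
    for _ in range(n_words):
        nxt = guess_next(out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


print(extend("the", 3))  # the sun is hot
```

The output looks sensible, yet the program has no idea what the sun is. It only repeats the statistics of what it was fed.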

What ChatGPT (the “instruct” version of GPT) does is add a layer that aligns the interaction between the prompt and the response more closely with human expectations.

A large amount of human-curated Q&A has been added as a training layer on top of GPT to make its output more palatable to our interaction sensitivities. And that’s great, OpenAI really hit the nail on the head with it in terms of democratizing the use of LLMs.

All that said, note what the LLM did **not** do:

- It did not have an ontology (what things are).

- It did not have a methodology (how to investigate the things).

- It did not have an axiology (what is the value of things).

- It did not have an epistemology (what is the nature of knowledge acquirable for the things).

Therefore, it cannot and does not reason. It is merely* trained to guess in a way that the output looks good to humans.

* I write "merely" to contrast its process with reasoning. In terms of pure technological feat, it is very very impressive.

B) LLMs don’t have a long “working” memory.

Currently, GPT-4 has a memory of about 8 thousand tokens to work with. That’s a lot for some tasks but very little for others.

The current problem with increasing memory is that its cost is quadratic: doubling the window roughly quadruples the work the attention mechanism has to do. In simple terms, it can get computationally expensive (read: “pricey”) really fast. That’s one of the reasons why GPT-3, which has half the memory size, is faster than GPT-4.
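A quick back-of-the-envelope calculation shows what "quadratic" means here. The sketch assumes standard self-attention, where every token is compared with every other token, and it counts only those pairwise comparisons. The window sizes 4096 and 8192 are round numbers chosen to match "about 8 thousand" and "half" of that, and `attention_pairs` is a hypothetical helper, not a real cost model.

```python
def attention_pairs(window_tokens):
    """Number of token-to-token comparisons in one full attention pass."""
    return window_tokens * window_tokens


small, large = 4096, 8192

print(attention_pairs(small))  # 16777216
print(attention_pairs(large))  # 67108864
print(attention_pairs(large) / attention_pairs(small))  # 4.0
```

Twice the memory, four times the comparisons. Stretch the window tenfold and the count grows a hundredfold.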

There exist, of course, optimizations and tricks to move the needle further. Yet at the current state of the art, really huge windows are impractical.

And with that, we are finally ready to explore the answer to our original question.

Can it REALLY help me in my daily work creating software?

Not really, not yet – if you are a senior developer, that is.

Sure, getting some small standalone functions created faster is great and all, but that’s not what you really spend most of your time on.

The biggest hurdle right now is that…

LLMs don’t have a big enough context window to consume a non-trivial code-base.

The “hack” of creating embeddings out of everything and shoving them into a vector database doesn’t really fit what we need here. It doesn’t pay to “answer” questions by injecting into the prompt a few small excerpts of code found via an external similarity search.
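For readers who have not seen the embedding “hack” up close, here is a minimal sketch of its shape. Everything in it is an assumption made for illustration: word counts stand in for a real embedding model, a plain Python list stands in for the vector database, and the snippets and helper names (`embed`, `cosine`, `top_k`, `build_prompt`) are invented.

```python
import math
import re
from collections import Counter

# Invented one-line "code excerpts" standing in for a real code-base.
SNIPPETS = [
    "def parse_invoice(path): return read the invoice file and parse totals",
    "def send_email(to, body): connect to smtp and send the email",
    "def compute_tax(total): apply the tax rate to the invoice total",
]


def embed(text):
    """Stand-in 'embedding': counts of lowercase word-like tokens."""
    return Counter(re.findall(r"[a-z_]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(question, k):
    """Return the k snippets most similar to the question."""
    q = embed(question)
    ranked = sorted(SNIPPETS, key=lambda s: cosine(q, embed(s)), reverse=True)
    return ranked[:k]


def build_prompt(question, k=1):
    """Inject only the k best-matching excerpts into the prompt."""
    excerpts = "\n".join(top_k(question, k))
    return f"Context:\n{excerpts}\n\nQuestion: {question}"


print(build_prompt("how is the invoice tax computed"))
```

With `k=1` the model sees `compute_tax` and nothing else. Whatever calls it, overrides it, or depends on it never reaches the prompt, which is exactly the limitation described above.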

To be realistically useful, the LLM should have a full view of the code-base.

Software engineering requires reasoning.

If you are not working on toy projects, your responsibility is not to type random stuff that compiles. Your job is to think and produce solutions that add value. And we already know that’s not what LLMs do.

The time/effort it takes to refactor/correct the things an LLM throws out outweighs the benefit it provides – at least when it comes to non-trivial things.

But not all is bleak!

LLMs are great when you are learning. In this industry, nobody has learned it all and no one ever will, because tools & the knowledge domain evolve at an astonishingly fast pace. So be it a junior or a senior, you have to be a learner.

Even though an LLM is only doing what it does best (“guessing things”), it can still act as a mediocre coach and give you examples to work with. That can accelerate the learning process because the hardest part of learning a new topic is overcoming the fog of utter ignorance, which can at times be very daunting.